The tension

Hoteliers and revenue managers grew up on last-click attribution: spend on Google Ads, a guest books, divide one by the other. AI search breaks that model. A guest might ask ChatGPT for “a bike-friendly hotel in Paris,” get a recommendation, open it in a new tab, verify it on Google, check a few OTAs, then book direct three days later. No single attribution path captures that journey.

AI hotel search behaves like brand marketing, not performance marketing. The returns are real but diffuse. Asking it to produce a clean cost-per-booking number is asking the wrong question.

The real hotel-booking flow

Based on the captures we’re running (see the source-shift study) and public data, the typical AI-influenced hotel journey looks like this:

- 1. AI Search: the messy research phase

Long conversational prompts. “I’m going to Nashville with friends; foodie, music off the beaten track — what should I do?” LLMs handle the open-ended hotel-discovery work Google was never good at.

- 2. Google validation: due diligence

Reviews, photos, pricing, the hotel’s direct site, a couple of OTAs for comparison. 94% of LLM users return to Google before they book. They’re not re-searching; they’re verifying.

- 3. Booking: direct or OTA, brand-query triggered

The final click lands on a branded query (“hotel ranque paris”) or a direct-site visit. To standard analytics that click looks organic / direct — the AI influence is invisible.

The blind spot

Steps 1 and 2 are massively unaccounted for in hotel marketing and revenue-manager reports today. The AI layer is doing the work of discovery; Google takes the credit because it sits at the conversion step. Any hotelier looking only at GA4 source reports will under-weight AI by an order of magnitude.

Four ways to measure (imperfectly)

None of these give you a clean attribution number. Together, they triangulate a directional picture of how much of your hotel demand is AI-influenced.

Server log analysis — the only direct visibility signal

Before the visitor shows up in GA4, the LLM has already fetched your page. Every time ChatGPT, Claude, or Perplexity retrieves content to answer a user prompt, the fetch lands in your web server logs with a distinctive user-agent (ChatGPT-User, Claude-User, PerplexityBot). Gemini is the exception — its fetches ride Google’s existing crawler infrastructure and are largely indistinguishable from Googlebot in logs.

Why it’s the gold standard (except for Gemini)

Logs record actual LLM activity on your content — not simulated prompts, not self-reported surveys. Volume of ChatGPT-User hits, hit frequency per page, and which URLs get retrieved are a direct measurement of how much your site is being consulted inside real AI conversations. Tools like Oncrawl’s AI bots log analyzer slice this out of raw access logs.

This is the closest thing to an “AI rank tracker” that actually exists. Everything else is a proxy.
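As a rough illustration of the log slicing described above, here is a minimal Python sketch that counts hits per AI user-agent and per URL. It assumes the Apache/Nginx "combined" log format; the sample lines, IPs, and exact user-agent strings are made up for the example, and only the three agent names come from the text above. Real logs will need the pattern adjusted to your server's format.

```python
import re
from collections import Counter

# User-agent substrings named in the text above; extend as needed.
AI_AGENTS = ("ChatGPT-User", "Claude-User", "PerplexityBot")

# Assumption: Apache/Nginx "combined" log format.
LOG_LINE = re.compile(
    r'^(?P<ip>\S+) \S+ \S+ \[(?P<ts>[^\]]+)\] '
    r'"(?P<method>\S+) (?P<path>\S+) [^"]*" '
    r'(?P<status>\d{3}) \S+ "[^"]*" "(?P<ua>[^"]*)"'
)

def ai_agent(user_agent):
    """Return the matching AI agent name, or None for ordinary traffic."""
    for name in AI_AGENTS:
        if name.lower() in user_agent.lower():
            return name
    return None

def summarise(lines):
    """Count hits per agent and per (agent, path); skip unparseable lines."""
    hits, urls = Counter(), Counter()
    for line in lines:
        m = LOG_LINE.match(line)
        if not m:
            continue
        agent = ai_agent(m.group("ua"))
        if agent is None:
            continue
        hits[agent] += 1
        urls[(agent, m.group("path"))] += 1
    return hits, urls

# Illustrative log lines (invented for this example).
sample = [
    '203.0.113.7 - - [12/Mar/2026:10:15:32 +0000] "GET /rooms HTTP/1.1" 200 5120 "-" "Mozilla/5.0 (compatible; ChatGPT-User/1.0)"',
    '203.0.113.9 - - [12/Mar/2026:10:16:01 +0000] "GET /faq HTTP/1.1" 200 2048 "-" "Mozilla/5.0 (compatible; PerplexityBot/1.0)"',
    '198.51.100.4 - - [12/Mar/2026:10:17:45 +0000] "GET /rooms HTTP/1.1" 200 5120 "https://www.google.com/" "Mozilla/5.0 (Windows NT 10.0)"',
]
hits, urls = summarise(sample)
```

The third line is an ordinary browser visit and is ignored. A user-agent match alone is not proof of origin, since the string is trivially spoofed.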

The chicken-and-egg problem

If you never run prompts, you stay blind to parts of the surface: you miss fan-out queries, you can’t see where you rank on long-tail topics that sit outside classic SEO scope, and you can’t benchmark competitors. Some tracking is necessary to understand the surface.

But if you track too much, you inflate your logged numbers and add noise to the very signal tracking was meant to measure. The fix isn’t “don’t track” — it’s controlled windows, longer intervals, and clean segmentation when you do. Most mature setups I see:

- • Focus on non-web-search queries (the model answering from its own knowledge, not fetching)

- • Separate API traffic vs UI scraping (very different bot fingerprints)

- • Split by model (GPT-5.1 / 5.2 / 5.3 / 5.4 behave differently)

- • Split by provider (OpenAI / Anthropic / Perplexity / Google) — each has its own fetch pattern

The prompt-tracking trap

Daily prompt-tracking tools simulate user queries against ChatGPT / Perplexity / Claude to benchmark brand visibility. Every simulated prompt can trigger an AI-user-bot fetch against your site — injecting synthetic traffic directly into the only signal that was real. A hotel running 1,000 prompts a day can easily double its logged ChatGPT-User hits without a single real guest being involved.

There’s a convenient-for-vendors side to this. Prompt-tracking tools are SaaS products; they need active users and a “continuous view.” Running your panel daily is better for their retention than for your data hygiene. And if daily tracking nudges your log numbers up, the tool looks more valuable to the customer watching both dashboards. Not a conspiracy, just vendor incentives worth naming.

Two funky issues in competitive markets:

- • Your competitors may be running prompt audits that mention your brand, inflating your logged AI-bot traffic whether you asked for it or not. In a competitive market this gets worse as AI-search marketing spreads.

- • Always reverse-DNS the AI bots. We’ve witnessed plenty of fake AI-bot traffic — spoofed user-agents from commodity proxies pretending to be ChatGPT-User. Without reverse DNS + IP range verification, the panel is trivially poisoned.
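The IP-range half of that verification can be sketched in a few lines of Python. The CIDR ranges below are placeholders taken from the RFC 5737 documentation blocks, not real provider ranges; the assumption is that you replace them with whatever each provider publishes for its bots. The reverse-DNS half needs a network lookup (for example with `socket.gethostbyaddr`) and is left out so the sketch stays offline.

```python
import ipaddress

# PLACEHOLDER ranges (RFC 5737 documentation blocks), NOT real provider ranges.
# Replace with the ranges each provider publishes for its bots.
PUBLISHED_RANGES = {
    "ChatGPT-User": ["192.0.2.0/24"],
    "PerplexityBot": ["198.51.100.0/24"],
}

def is_verified(agent, ip):
    """True if `ip` falls inside a range listed for the claimed agent."""
    addr = ipaddress.ip_address(ip)
    return any(
        addr in ipaddress.ip_network(cidr)
        for cidr in PUBLISHED_RANGES.get(agent, [])
    )
```

A hit that claims to be ChatGPT-User but fails `is_verified` would be dropped from the panel, or counted separately as suspected spoofing.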

How to keep logs clean: use prompt-tracking as an audit tool, not a daily KPI. Once a week is the practical ceiling; once a month is better. If you must run it daily, pin the runs to a controlled window (e.g. 02:00–04:00) and exclude that window from the log-analysis panel. LLM “rankings” from simulated prompts can audit coverage; logs are the only reality check.

- • Hits per day by AI user-agent

- • By model and provider

- • API vs UI scraping (split the fingerprints)

- • Top URLs fetched (rooms, FAQ, offers)

- • 4xx / 5xx rates on AI hits (breaks hurt)

- • Always reverse-DNS AI-bot IPs — spoofing is common

- • Exclude prompt-tracker windows

- • Separate bot vs user referrer

- • Prefer non-web-search focus for the baseline

- • Don’t block in robots.txt unless intended
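Two items from the checklist above, excluding the prompt-tracker window and watching error rates on AI hits, are simple enough to sketch. The 02:00 to 04:00 window follows the example given earlier; the hit data is invented, and the sketch assumes timestamps are already parsed into server-local `datetime` objects.

```python
from datetime import datetime, time

# Assumption: prompt-tracker runs are pinned to 02:00-04:00 server time.
WINDOW_START, WINDOW_END = time(2, 0), time(4, 0)

def in_tracker_window(ts):
    """True for timestamps from 02:00 up to, but not including, 04:00."""
    return WINDOW_START <= ts.time() < WINDOW_END

def clean_panel(hits):
    """Drop hits inside the tracker window. hits: list of (datetime, status)."""
    return [(ts, status) for ts, status in hits if not in_tracker_window(ts)]

def error_rate(hits):
    """Share of hits that returned a 4xx or 5xx status."""
    if not hits:
        return 0.0
    errors = sum(1 for _, status in hits if status >= 400)
    return errors / len(hits)

# Illustrative AI-bot hits (invented for this example).
hits = [
    (datetime(2026, 3, 12, 2, 30), 200),   # inside window, likely synthetic
    (datetime(2026, 3, 12, 3, 59), 404),   # inside window
    (datetime(2026, 3, 12, 4, 0), 200),    # boundary: 04:00 is kept
    (datetime(2026, 3, 12, 9, 15), 200),
    (datetime(2026, 3, 12, 14, 5), 500),
    (datetime(2026, 3, 12, 21, 40), 200),
]
panel = clean_panel(hits)
rate = error_rate(panel)
```

Excluding a fixed window also throws away any real guest-driven fetches that land in it, which is one reason to pick a low-traffic slot.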

GA4 referrer tracking — the floor, wired end-to-end

Start with what GA4 can already see. Filter sessions by referrer for chatgpt.com, perplexity.ai, claude.ai, gemini.google.com, copilot.microsoft.com. Create a custom channel group that bundles them into “AI” so every GA4 report (acquisition, engagement, conversions) breaks out that segment.
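The channel group itself is configured in the GA4 interface, but the same matching rule is handy when working on raw exports. A minimal Python sketch, assuming each referrer is a full URL with a scheme; the function name `channel_for` and the "Other" label are illustrative, not GA4 terminology.

```python
from urllib.parse import urlparse

# Referrer hosts listed in the text above.
AI_REFERRER_HOSTS = {
    "chatgpt.com",
    "perplexity.ai",
    "claude.ai",
    "gemini.google.com",
    "copilot.microsoft.com",
}

def channel_for(referrer):
    """Bucket a referrer URL into "AI" or "Other" by exact host match."""
    host = (urlparse(referrer).hostname or "").lower()
    if host.startswith("www."):
        host = host[4:]
    return "AI" if host in AI_REFERRER_HOSTS else "Other"
```

For example, `channel_for("https://chatgpt.com/")` returns "AI", while an empty referrer or `https://www.google.com/` falls into "Other". Exact host matching is a deliberate choice here: a substring match on "google.com" would wrongly sweep ordinary Google traffic into the AI bucket.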

Wire it to bookings, not just sessions

The measurement only earns its keep if the booking step is connected. Tag the confirmation event (IBE / booking-engine “thank you” URL) as a GA4 conversion, with revenue where possible. Then the AI channel group gets its own conversion rate, revenue, and ADR — same fields as organic, direct, and paid. Without the booking wire, you’re measuring traffic shape, not business impact.

The number you get is a floor, not a ceiling. Most AI-influenced journeys strip the referrer somewhere between the AI surface and the booking: a copy-paste, a mobile handoff, a tab close. Graphite’s research (“AI is much bigger than you think”) argues the real AI share is several multiples of the referrer number. Treat GA4 as a trend line, not a measure of total AI influence.

One anonymised case: a 5-star Paris luxury hotel with GA4 wired to the AI custom channel group and to the booking-engine thank-you event. First-user source dimension, 15 months of data (Feb 2025 → Apr 2026). ChatGPT, Perplexity, Copilot, Gemini, Claude matched by exact source value.

Real GA4 data, single property (anonymised). AI share of new users, monthly. The inflection lands in Dec 2025 and stabilises above 3% in 2026. April 2026 is a partial month.

AI source mix on Hotel A (Feb 2025 → Apr 2026) — what GA4 sees

This is what GA4’s first-user-source dimension shows. Read with a big caveat, below — especially for Gemini.

The ChatGPT share above is over-stated — Gemini is missing

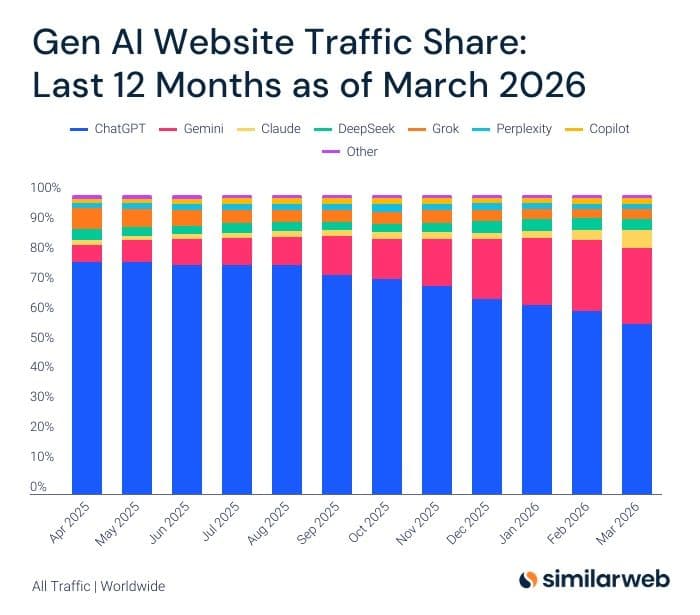

SimilarWeb’s worldwide panel of GenAI website traffic tells a very different story from our referrer table. By March 2026, ChatGPT is down to roughly 55% of GenAI site traffic and Gemini has climbed to ~25%. On Hotel A’s GA4, Gemini is 0.5% (12 users over 15 months); those are almost certainly us testing, not real guests.

The reason is structural: Gemini’s hotel-fetching rides Google’s infrastructure. When a Gemini user clicks through to the hotel site, the referrer often looks like google or is stripped entirely. GA4 sees it as organic / direct. The 97.4% ChatGPT share on Hotel A is GA4’s view, not the real one.

SimilarWeb, worldwide, all traffic, Apr 2025 – Mar 2026. ChatGPT share falling; Gemini rising sharply. Aggregate usage mix ≠ what GA4 first-source attributes to a hotel site.

What GA4 typically shows, once wired

Important — this is still a floor. GA4 can’t see: Gemini (rides Google’s infrastructure, shows as google), Google AI Overviews (also google), Bing Chat inside Bing (shows as bing), copy-paste of a hotel name from an AI answer (shows as (direct)), or AI-assisted Google searches that end in an organic click. The 4.94% is only the AI traffic that kept its referrer intact end-to-end. True AI influence is likely at least 2× what GA4 shows, and quite possibly more on a luxury property where the journey is long and research-heavy.

A plug-and-play Looker Studio dashboard — input your GA4 property and get the same AI-share line, engagement, and conversion breakdown out of the box. Will be linked here once published.

If paid-ad spend is changing, AI share expressed as a % of total sessions will move for reasons that have nothing to do with AI. A 20% cut in Google Ads can make the AI % look like it jumped overnight.

Track both: AI sessions as a % of non-paid traffic (organic + direct + referral only) to isolate the AI signal, and AI sessions in absolute numbers to catch additive growth — the pie is getting bigger, not just redistributing. Humans love percentages; the absolute line is the honest one.
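A small worked example makes the distortion concrete. The session counts below are invented for illustration, and the sketch assumes AI sessions are already counted inside the referral bucket.

```python
def ai_shares(paid, organic, direct, referral, ai):
    """`ai` sessions are assumed to be already counted inside `referral`."""
    non_paid = organic + direct + referral
    total = paid + non_paid
    return {
        "ai_sessions": ai,
        "share_of_total": ai / total,
        "share_of_non_paid": ai / non_paid,
    }

# Same AI traffic in both months; only paid sessions change (a 20% cut).
before = ai_shares(paid=4000, organic=3000, direct=2400, referral=600, ai=300)
after = ai_shares(paid=3200, organic=3000, direct=2400, referral=600, ai=300)
```

With these numbers, AI share of total sessions rises from 3.0% to roughly 3.3% even though not one extra AI session arrived, while AI share of non-paid traffic stays at 5.0% and the absolute count stays at 300.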

Branded query growth — the leading indicator

If AI search is doing the hotel-discovery work, the fingerprint shows up in Google Search Console. Generic queries (“all-inclusive holiday”, “hotel with pool paris”) decline. Branded queries (“hotel ranque”, “land of legends turkey”) climb — often double-digit year-over-year — because guests have decided which hotel from the AI conversation and are Google-searching it by name.

Track: brand impressions, brand clicks, and the ratio of brand-to-generic queries on your hotel property in GSC. Rising brand share against falling generic demand is the behavioural signature of AI influence. Jay Chauhan walked through this shift in a recent talk — generic travel keywords down, branded hotel names double-digit up.
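One way to compute that brand share from a GSC query export, as a hedged Python sketch. The brand token list, the row layout `(query, clicks, impressions)`, and all the numbers are assumptions for illustration; a real property usually needs several tokens to cover misspellings and abbreviations.

```python
BRAND_TERMS = ("ranque",)  # hypothetical brand tokens for one property

def is_branded(query):
    """True if the query contains any brand token (case-insensitive)."""
    q = query.lower()
    return any(term in q for term in BRAND_TERMS)

def brand_share(rows):
    """rows: iterable of (query, clicks, impressions). Returns brand click share."""
    brand = generic = 0
    for query, clicks, _impressions in rows:
        if is_branded(query):
            brand += clicks
        else:
            generic += clicks
    total = brand + generic
    return brand / total if total else 0.0

# Illustrative year-over-year exports (invented numbers).
last_year = [("hotel ranque paris", 400, 900), ("hotel with pool paris", 600, 20000)]
this_year = [("hotel ranque paris", 500, 1100), ("hotel with pool paris", 500, 18000)]
shift = brand_share(this_year) - brand_share(last_year)
```

The sketch uses clicks rather than impressions on purpose, since impressions are the number most exposed to fan-out and rank-tracker noise.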

GSC fan-outs are noisy — same playbook as 2010s rank trackers

ChatGPT’s web search issues fan-out queries (multiple sub-queries behind a single user prompt), and those land as impressions in Google Search Console. Long-tail impressions (queries of 10+ words) get the most pollution because prompt-tracking tools replay the same fan-outs every day. This isn’t new: SEO rank trackers pumped GSC impressions the same way when they appeared. The AI version is just louder, because one prompt fans out into dozens of queries. Treat long-tail GSC numbers as directional, review them at audit cadence only, and pin branded queries (which fan-outs don’t inflate) as the trustworthy signal.

Pre- and post-booking qualitative form — the closest thing to AI revenue attribution

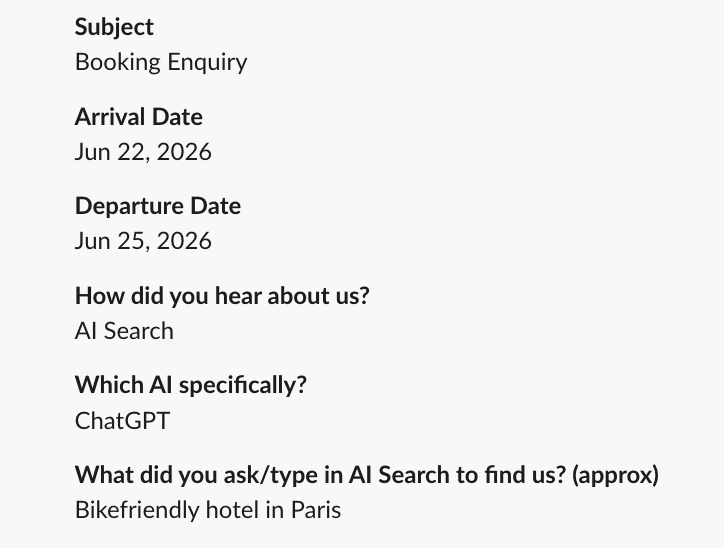

Of the four methods on this page, this is the only one that ties an AI source to an actual revenue-bearing event. Logs measure bot fetches. GA4 referrers break the second a guest copy-pastes a hotel name. Branded queries are a leading indicator. The form answer is the booking itself, with the source attached. The cheapest, most direct signal: ask. Add three fields to your hotel booking enquiry or post-stay email:

- • How did you hear about us? Include “AI Search” as an option.

- • If AI, which one? ChatGPT / Gemini / Perplexity / Claude / Copilot / Grok / other.

- • What did you ask to find us? (approx.) The exact prompt is research gold.

Why this works when nothing else does

- • It survives the journey. Tab close, mobile-to-desktop handoff, three-day gap between the ChatGPT conversation and the booking: none of that breaks the form answer. Referrers break on all of them.

- • It binds to revenue. The answer attaches to the booking record. Filter your PMS exports by “heard about us = AI” and you have AI-attributed ADR, length of stay, channel mix, and lifetime revenue, not just session counts.

- • It catches the dark traffic. A guest who copy-pasted the hotel name from a Gemini answer (invisible in GA4) still ticks “AI Search.” The 2× floor multiplier from Method 2 starts to become measurable.

- • The prompt is research gold. “Bikefriendly hotel in Paris” tells you the schema fields, FAQ entries, and content angles to add. No prompt-tracking tool replicates that: it’s a real guest’s real query.
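The "filter your PMS exports" step can be sketched as follows. Every column name here (`heard_about_us`, `ai_tool`, `nights`, `room_revenue`) and every row is hypothetical; real PMS exports differ by vendor, so treat this as the shape of the calculation, not a drop-in script.

```python
import csv
import io

def ai_attributed_summary(csv_text, source_column="heard_about_us", ai_value="AI Search"):
    """Summarise bookings whose form answer matches `ai_value`."""
    reader = csv.DictReader(io.StringIO(csv_text))
    bookings = [r for r in reader if r[source_column].strip() == ai_value]
    nights = sum(int(r["nights"]) for r in bookings)
    revenue = sum(float(r["room_revenue"]) for r in bookings)
    return {
        "bookings": len(bookings),
        "nights": nights,
        "revenue": revenue,
        # ADR = room revenue divided by room nights sold.
        "adr": revenue / nights if nights else 0.0,
        "avg_length_of_stay": nights / len(bookings) if bookings else 0.0,
    }

# Illustrative export (invented rows and column names).
export = """booking_id,heard_about_us,ai_tool,nights,room_revenue
1001,AI Search,ChatGPT,3,1200.00
1002,Google,,2,700.00
1003,AI Search,Gemini,2,900.00
1004,Friend or family,,4,1500.00
"""
summary = ai_attributed_summary(export)
```

On this toy export, two of the four bookings are AI-attributed, covering 5 nights and 2,100 in room revenue, for an ADR of 420 and an average stay of 2.5 nights. Running the same summary on every other form answer gives a like-for-like comparison against non-AI sources.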

This is the same playbook self-serve software has run for a decade. PostHog, Linear, Notion, Loom, Vercel — every modern SaaS onboarding has a “How did you hear about us?” field in step one of signup, with a follow-up free-text. They trust it more than their analytics stack because attribution tools fail for content-led, word-of-mouth, and cross-device journeys — the exact pattern AI search now produces in hospitality. Hotels are arriving at the same answer ten years late.

Hotel Ranque wired this into their booking form in 2026. The form answer is the closest thing to a revenue-attributable AI signal we’ve seen in the industry — and unlike everything else on this page, it doesn’t degrade as the AI surfaces evolve. People will still know they used ChatGPT in 2027.

Hotel Ranque booking enquiry. Real answer: “Bikefriendly hotel in Paris” on ChatGPT.

AI channels worth watching (opportunities, not measurement)

The four methods above are how you measure AI demand. These are the surfaces where that demand actually lands — and where the next wave of measurement might come from. None of these are KPIs you can track yet; they’re channels worth taking a position on now.

In-surface bookings

Apps inside ChatGPT let guests book without leaving the chat. The Hotels Network has shipped one; others are following. Once activated for a property, conversion data lives inside the AI channel, not reconstructed after the fact. The big OTAs and chains aren’t meaningfully here yet — a small window where independent hotels can get a booking channel Booking.com doesn’t dominate. Unusual for this industry.

Paid surface + future analytics

Sponsored placements are live (see the ads study) and typically come with an advertiser dashboard. The interesting part isn’t only the spend — it’s that if OpenAI exposes topic-level prompt analytics to advertisers (the way Google Ads exposes search-term reports), buying ads becomes the cheapest way for hotels to get a first-party view of the queries their category gets served into.

Panels, extensions, partnerships

A growing set of vendors claim to aggregate real (not simulated) LLM prompt traffic. The data is still partial, and the privacy questions are non-trivial (users consenting to have their AI conversations shipped to a third party). If it works, it replaces the simulated ranking game with real impression data. Worth watching, not yet worth committing to.

Bottom line

Every number in the final hotel study will be directional, not precise. AI attribution is imperfect by design — the whole point of the AI layer is that it hides its own workings. Waiting for a clean number is waiting forever.

AI is here, and it’s here to stay. The hotels that build the measurement scaffolding now — GA4 referrers wired to bookings, branded-query dashboards, a pre-booking form, an Apps integration — will understand their AI channel a year before the hotels that are still waiting for Google Analytics to do it for them.